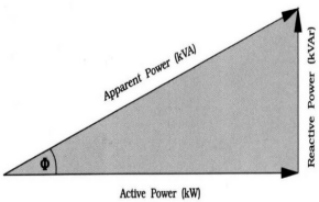

Power Factor Definition: Power factor is the ratio of the kW to the kVA drawn by an electrical load, where the kW is the actual (active) load power and the kVA is the apparent load power. It is a measure of how effectively the current is being converted into useful work output and, more particularly, is a good indicator of the effect of the load current on the efficiency of the supply system.

What causes Power Factor to change? All current drawn in a circuit causes losses in the supply system. A load with a power factor of unity (1.0) loads the supply most efficiently, whereas a load with a power factor of 0.7 or lower draws more current for the same kW and so increases the losses in the system. A poor power factor results from a significant phase difference between the voltage and the current drawn by the load, or from a high harmonic content (a distorted current waveform). A distorted current waveform may be caused by variable speed drives, switched mode power supplies, discharge lighting, rectifiers, or other electronic loads.

In AC supplies, including 3 phase supplies, the relationship between the powers can be drawn as a triangle: active power (kW) and reactive power (kVAr) form the two perpendicular sides, and apparent power (kVA) is the hypotenuse.
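The definition can be illustrated with a short Python sketch. The function names and the 70 kW / 100 kVA load are hypothetical, and the loss comparison assumes a fixed supply voltage and purely resistive supply losses, so that for the same kW the current scales as 1/PF and the loss as 1/PF squared.

```python
def power_factor(kw: float, kva: float) -> float:
    """Power factor as the ratio of active power (kW) to apparent power (kVA)."""
    if kva <= 0:
        raise ValueError("kVA must be positive")
    return kw / kva


def relative_supply_loss(pf: float) -> float:
    """Resistive (I^2 R) supply loss relative to the same kW drawn at unity PF.

    Assumption: fixed voltage and fixed kW, so current is proportional to 1/pf
    and the resistive loss is proportional to 1/pf^2.
    """
    if not 0 < pf <= 1:
        raise ValueError("power factor must be in (0, 1]")
    return 1.0 / pf ** 2


# Hypothetical load: 70 kW drawn at 100 kVA.
pf = power_factor(kw=70.0, kva=100.0)
print(f"power factor: {pf:.2f}")
print(f"supply loss relative to unity PF: {relative_supply_loss(pf):.2f}x")
```

Under these assumptions a load at a power factor of 0.7 causes roughly twice the supply loss of the same kW drawn at unity.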

Power Factor is expressed as cos φ, where φ is the angle between Apparent Power and Active Power.
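As a rough illustration of the angle relationship, the sketch below derives the apparent power, the power factor and the angle from active and reactive power. The function name and the 80 kW / 60 kVAr figures are hypothetical, and the calculation assumes sinusoidal voltage and current, so it does not account for the harmonic distortion mentioned earlier.

```python
import math


def power_triangle(kw: float, kvar: float) -> tuple[float, float, float]:
    """Return (kVA, power factor, angle in degrees) for a sinusoidal load."""
    kva = math.hypot(kw, kvar)          # hypotenuse of the power triangle
    if kva == 0:
        raise ValueError("load draws no power")
    phi = math.atan2(kvar, kw)          # angle between apparent and active power
    return kva, math.cos(phi), math.degrees(phi)


# Hypothetical load: 80 kW active power, 60 kVAr reactive power.
kva, pf, phi_deg = power_triangle(kw=80.0, kvar=60.0)
print(f"apparent power: {kva:.1f} kVA")
print(f"power factor (cos phi): {pf:.2f}")
print(f"phi: {phi_deg:.1f} degrees")
```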

How does Power Factor Correction work? Capacitive Power Factor Correction (PFC) is applied to electric circuits to offset the inductive (lagging reactive) component of the current, thereby reducing the current drawn from the supply and the losses in the supply. The capital cost is usually recovered in less than 1 year.
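A minimal sketch of how the capacitor rating might be estimated, using the standard relation Qc = P × (tan φ1 − tan φ2) between the existing and target angles. The function name, the 100 kW load and the target power factor of 0.95 are assumptions chosen for illustration; the calculation covers only a lagging, sinusoidal load and is not a sizing procedure for harmonic-rich installations.

```python
import math


def correction_kvar(kw: float, pf_existing: float, pf_target: float) -> float:
    """Capacitive kVAr needed to raise a lagging load from pf_existing to pf_target.

    Uses Qc = P * (tan(phi_existing) - tan(phi_target)), valid for sinusoidal
    waveforms where power factor equals cos(phi).
    """
    for pf in (pf_existing, pf_target):
        if not 0 < pf <= 1:
            raise ValueError("power factor must be in (0, 1]")
    if pf_target < pf_existing:
        raise ValueError("target power factor must not be below the existing one")
    tan_existing = math.tan(math.acos(pf_existing))
    tan_target = math.tan(math.acos(pf_target))
    return kw * (tan_existing - tan_target)


# Hypothetical case: 100 kW load corrected from 0.70 to 0.95.
qc = correction_kvar(kw=100.0, pf_existing=0.70, pf_target=0.95)
print(f"required capacitor rating: {qc:.1f} kVAr")
```

In practice a rating would be rounded to an available capacitor bank size and checked against the site's harmonic conditions.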

AJM Energy e-mail: info@kwsaving.co.uk
